Are You Ready? Why Capability Intelligence Is Redefining Resilience

Most organizations have invested heavily in understanding risk. They have dashboards, frameworks, heat maps, and quarterly reports. They monitor geopolitical instability, cyber threats, third-party exposure, and regulatory change, often in real time.

And yet, when disruption arrives, too many organizations discover they weren't ready for it.

That gap between awareness and readiness is the central problem capability intelligence is designed to solve. It was also the focus of a recent webinar hosted by GRC 20/20's Michael Rasmussen with iluminr co-founders Joshua Shields and Marcus Vaughan, a conversation that covered some of the most important shifts happening in risk and resilience right now.

You can catch up on the webinar here.

The Problem with Knowing the Storm Is Coming

Risk intelligence tells you something about the world outside your organization. Capability intelligence tells you something about the organization itself: specifically, whether it can actually respond when things go wrong.

The distinction matters more than it might seem, as many organizations mistake documentation for demonstrated capability. They've completed the training, signed off the plans, run the annual exercise, passed the audit. These activities have value. But as Joshua put it plainly:

“Policies tell you what should happen, plans tell you what people intend to do. But neither tells you how people actually perform under pressure.”

The result, as Michael described it, is organizations that are excellent historians but poor navigators. They've studied every past disruption in detail, but they're ill-equipped for the next one, which won't look the same.

The operating environment has also fundamentally changed. Geopolitical instability reshapes supply chains overnight. Cyber risk has evolved into an operational resilience question: not whether an attack occurs, but whether services can continue when it does. Third-party ecosystems create layered dependencies that extend well beyond direct control. Risk doesn't arrive from one direction anymore; it converges from several at once, in ways that are hard to model from the outside looking in.

From Assertion to Evidence

For years, organizations could satisfy board and regulatory oversight by showing their artefacts: the framework, the plan, the risk register. There was an implicit assumption that if the documentation existed, the capability existed.

That assumption no longer holds.

Boards and regulators are increasingly asking not whether an organization has documented resilience, but whether it can demonstrate resilience. It's a significant shift from assertion to evidence. A board doesn't want to hear that resilience is green on a quarterly heat map. It wants to know whether critical services can continue under stress, whether third-party dependencies are fully understood, and whether recovery capabilities have been tested.

Capability intelligence is what makes that evidence possible. It gives leadership something they can point to: we've observed how our teams make decisions under pressure. We've identified where escalation breaks down. We know where our dependencies are likely to fail first. We understand the gap between what our people think will happen and what actually happens on the ground.

Where Microsimulations Come In

The most practical way to generate capability intelligence is through microsimulations: short, scenario-based exercises, typically five to fifteen minutes long, that put individuals in the middle of a disruption and ask them to make real decisions with limited information.

Unlike a traditional tabletop exercise, which tends to reach a small group of senior leaders once or twice a year, single-player microsimulations can be deployed to hundreds or thousands of people across every level of the organization, from executives to front-line staff, at a cadence that keeps pace with a changing threat environment. Each person works through a focused scenario independently: a vendor insolvency, a ransomware event, a regulatory shock, an extended outage. They assess the situation, weigh the options, and respond.

That independent, immersive format is what makes the data meaningful. Surveys measure self-perception. Microsimulations measure decisions under pressure. The responses are more considered, more revealing, and far less susceptible to the social dynamics that shape what people say in group settings.

Each simulation also delivers immediate, AI-driven feedback to the participant, so the experience is not just a measurement tool but also a learning moment.

Over time, repeated simulations build a body of behavioural intelligence that's difficult to obtain any other way. They surface whether decision authorities are understood or merely assumed, where escalation breaks down, where people are working from assumptions that don't match organizational reality, and, equally, where capability is strong.

What Capability Intelligence Measures

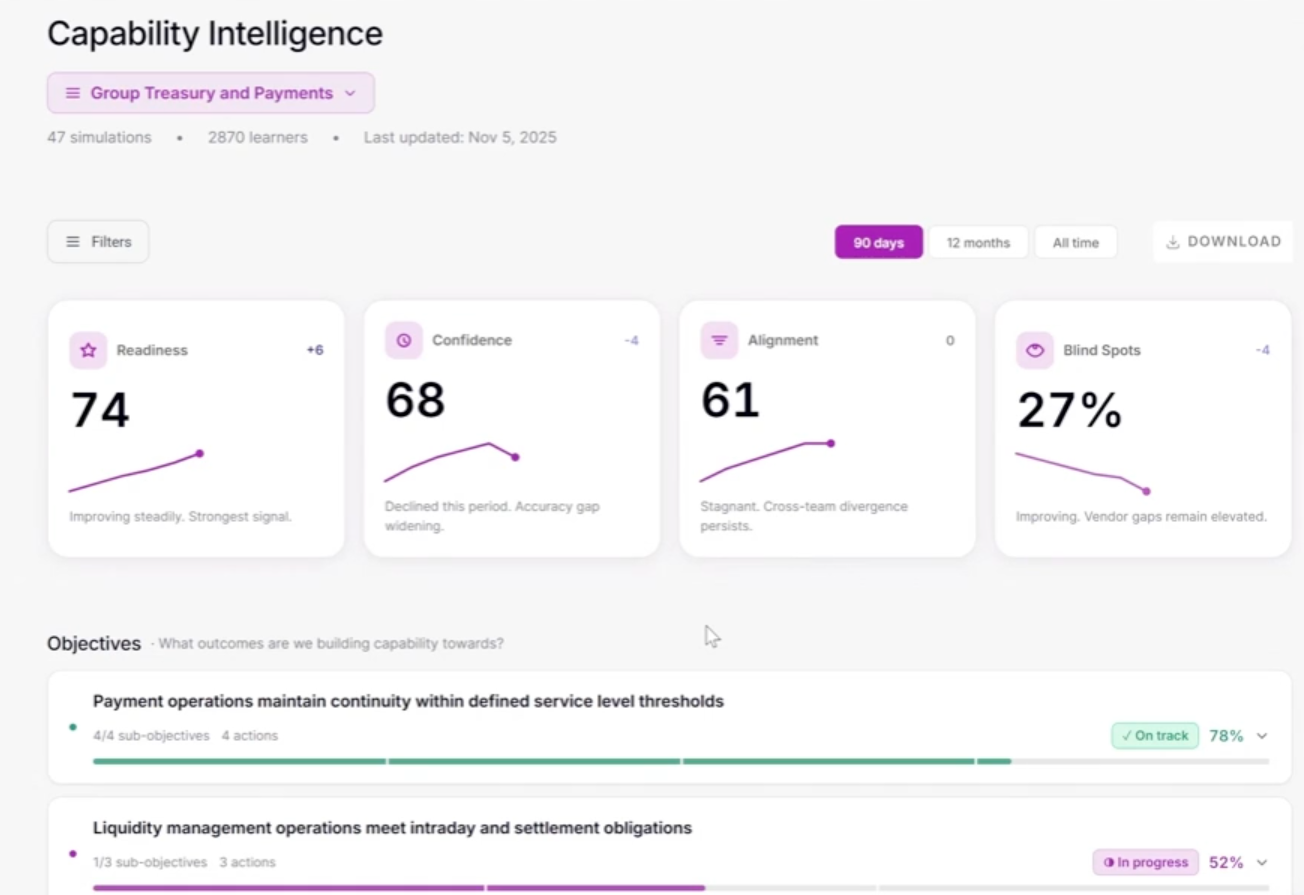

iluminr's platform surfaces capability intelligence across four key signals:

Readiness is the overarching measure: how well individuals, teams, and the organization perform under pressure. It's not expected to reach 100%, and it fluctuates. That's the point. It's a live measure, not a snapshot.

Alignment tracks consistency in decision-making across the organization. When different teams respond very differently to the same scenario, that misalignment needs to be either understood or addressed.

Confidence measures how well prepared people believe they are, and, crucially, it can be cross-referenced against actual readiness. Overconfidence is a risk in its own right. So is underconfidence in teams that are already performing well but haven't been given the opportunity to see that.

Blind spots and coverage identify what has been tested and what hasn't. Which critical services have been simulated? Which processes, applications, and vendors? Where are the gaps that nobody has had visibility of yet?

These signals are observable at the individual, team, divisional, and enterprise levels. They can be tracked over time, connected to strategic objectives, and linked directly to improvement actions.

Resilience as a Continuous State

One of the many valuable framings from the webinar came from Michael, who described organizational resilience as analogous to biological homeostasis. Your body doesn't check its temperature once a quarter. It monitors continuously, adjusting in real time. That's what effective resilience management should look like: not periodic snapshots, but ongoing calibration.

Marcus put it another way:

"Resilience is less like a destination you reach and more like health. There's no end state. What matters is whether you have the right ways to sense where your capability sits at any given moment, and whether you can act on that signal as the environment changes."

This is where iluminr's approach moves the conversation forward. When microsimulations are deployed regularly across the full breadth of an organization, the intelligence that comes back is current and continuous rather than historical and episodic. Risk happens between the assessments. Capability intelligence fills that gap.

What Enterprise Deployments Reveal

Across organizations that have run top-to-bottom microsimulation programs, such as financial institutions testing from headquarters down to branch networks and large enterprises reaching thousands of employees, certain patterns emerge consistently.

Awareness of plans and playbooks is often strong. But when scenarios are extended beyond the first 24 to 48 hours, confidence deteriorates noticeably. Teams that knew what to do in the first phase start to wobble when contingency strategies need to be sustained for longer. Escalation pathways are frequently unclear. What leadership assumes will happen on the ground often doesn't match how front-line staff feel about their readiness to respond.

These aren't findings you could get from documentation review. They only emerge when you put the scenario in front of people. And consistently, one of the more surprising outcomes is that staff who go through operational-level microsimulations come away wanting more practice, not less. The experience creates its own appetite for improvement.

From Handbrake to Navigation System

The organizing principle of the webinar, and arguably the right frame for thinking about where risk and resilience management is heading, is the navigation metaphor. Risk and resilience shouldn't function as a brake on organizational progress. They should function as the navigation system: helping leadership understand the route, anticipate hazards, and reach the destination with fewer wrong turns.

Capability intelligence makes that possible in a practical way. It gives organizations something more useful than warnings. It gives them evidence-based insight into how they'll perform under stress, and that's the foundation for the kind of informed confidence that boards, regulators, and leaders need to make good decisions in a volatile world.

To see how this comes to life, you can experience the Vendor Insolvency: Third-Party Risk Microsimulation here.
